#43 — Why The Demand for Systems Thinking Isn’t Going Away

There’s No Reason Turning Abstract Problems into Concrete Solutions Would Decline Now

Reflections

Programming Throwdown Is Back with an Episode on Agentic Coding

There’s a term that’s been gaining a lot of traction lately: Agentic Coding (systems that don’t just respond, but take action, executing complete processes with little to no human intervention). Major companies are already deploying these agents in real-world operations, making the topic impossible to ignore.

That’s exactly why the latest episode of Programming Throwdown is worth paying attention to. Patrick Wheeler and Jason Gauci return for a deep dive into agentic coding, where it came from, how it works under the hood, and what it really means to use these systems seriously in modern computing.

A Brief History of AI-Assisted Coding

IntelliSense has been around for decades (deterministic autocomplete based on the functions and types in your codebase). Then came GitHub Copilot, which uses LLMs to suggest entire blocks of code, not just symbol names. Cursor pushed things further by introducing multi-location tab completion: you accept a suggestion, and the tool flags follow-up work elsewhere in the file (imports to add, signatures to update…) so you can tab through a chain of related edits.

Then Claude Code arrived and changed everything. Instead of extending what you had partially written, you could describe what you wanted in plain English, and the agent would go off and do it. Jason has never written a single line of machine code in his life; he’s always run everything through a compiler. Passing instructions through an agent instead of writing code directly isn’t categorically different, he says.

As I read a few months ago from Kevin Naughton Jr.:

If you look at the history of computing, this isn’t a radical departure; it’s actually a natural progression. We started with binary and punch cards, moved to assembly, then to low-level languages like C, and eventually to high-level languages like Python and Java. Each step was about adding a layer of abstraction to make us more efficient. Communicating with computers in English is just the next logical layer.

Knowledge is King, as Kevin likes to remind us:

You must maintain an intimate understanding of how your systems work. If you don’t understand the underlying logic, you are only as good as the LLM you are leveraging. AI can handle the labor, but you must provide the oversight. Adopting the tools is mandatory, but maintaining your expertise is what makes you a 10x engineer rather than just a 10x typist.

What “Agentic” Actually Means

When the model receives a question, it doesn’t just emit text; it can also invoke tools, such as running a linter, reading a file, applying a patch, or executing a Bash command. The tool’s output is added to the context, and the model uses it to decide what to do next: call another tool or finally answer.
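To make that loop concrete, here is a minimal sketch. Nothing in it comes from the episode or from any real product: `fake_model`, the two tools, and the message format are hypothetical stand-ins, and the "model" is a scripted function so the example runs offline.

```python
from typing import Callable

# Hypothetical tools: each takes a string argument and returns text output.
TOOLS: dict[str, Callable[[str], str]] = {
    "read_file": lambda path: f"(contents of {path})",
    "run_linter": lambda path: f"{path}:1: unused import",
}

def fake_model(context: list[str]) -> dict:
    """Scripted stand-in for an LLM: pick the next step from the context."""
    if not any(line.startswith("tool:run_linter") for line in context):
        return {"tool": "run_linter", "arg": "app.py"}
    return {"answer": "Found 1 lint error in app.py."}

def run_agent(question: str, max_steps: int = 10) -> tuple[str, list[str]]:
    context = [f"user: {question}"]
    for _ in range(max_steps):
        step = fake_model(context)
        if "answer" in step:                       # the model chose to answer
            return step["answer"], context
        output = TOOLS[step["tool"]](step["arg"])  # the model chose a tool
        # The tool output is appended to the context for the next turn.
        context.append(f"tool:{step['tool']} -> {output}")
    return "step limit reached", context

answer, context = run_agent("Are there lint errors in app.py?")
print(answer)
```

The step limit is a design choice of this sketch: without it, a model that never decides to answer would loop forever.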

What makes it agentic is that this loop can recurse. Jason walks through a concrete example: ask it to remove all lint errors from a codebase, and it runs the linter, sees ten thousand errors, and, rather than trying to fix everything in one enormous diff, creates subtasks. Each subtask focuses on a narrow class of error and runs as an isolated sub-question. The original model becomes an orchestrator. Each subtask runs with a smaller, more focused context, which matters because, as both hosts emphasize, model quality degrades as context grows. A model working on one chapter of a textbook produces a better summary than one trying to hold the whole book in memory at once.
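The orchestrator idea can be sketched the same way. This is an illustration under assumptions, not how Claude Code or any other tool actually plans its work: the error records, the grouping-by-rule strategy, and `run_subtask` are all hypothetical.

```python
from collections import defaultdict

def plan_subtasks(lint_errors: list[dict]) -> dict[str, list[dict]]:
    """Group lint errors by rule so each subtask handles one narrow class."""
    groups: dict[str, list[dict]] = defaultdict(list)
    for err in lint_errors:
        groups[err["rule"]].append(err)
    return dict(groups)

def run_subtask(rule: str, errors: list[dict]) -> str:
    """Hypothetical sub-agent call: it sees only its own errors, nothing else."""
    fresh_context = [f"Fix every '{rule}' error listed below."]
    fresh_context += [f"{e['file']}:{e['line']}" for e in errors]
    # A real system would hand fresh_context to a model here.
    return f"{rule}: {len(errors)} location(s) handled"

errors = [
    {"file": "a.py", "line": 3, "rule": "unused-import"},
    {"file": "b.py", "line": 9, "rule": "line-too-long"},
    {"file": "c.py", "line": 1, "rule": "unused-import"},
]
subtasks = plan_subtasks(errors)
reports = [run_subtask(rule, errs) for rule, errs in subtasks.items()]
print(reports)
```

The point is that each call to `run_subtask` starts from a short, fresh context rather than from the full list of errors.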

The loop also makes eventual correctness possible. If the model hallucinates a method call that doesn’t exist, it can try to compile, catch the error in the tool output, and correct itself without any human intervention. Patrick notes that this is still imperfect around the edges, but the architecture is what enables recovery at all.
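Here is a toy version of that recovery loop. It assumes a scripted `fake_model` (hypothetical) whose first guess calls a string method that doesn’t exist; the error text is fed back and the second guess succeeds. It uses `exec` purely for illustration, which you would not do with untrusted code.

```python
from typing import Optional

def fake_model(feedback: Optional[str]) -> str:
    """Scripted stand-in for an LLM: the first guess is wrong, the second is fixed."""
    if feedback is None:
        return "result = 'hello'.shout()"   # str has no .shout() method
    return "result = 'hello'.upper()"

def try_code(source: str) -> tuple[bool, str]:
    """Run the snippet and report either its result or the error text."""
    scope: dict = {}
    try:
        exec(source, scope)
        return True, str(scope["result"])
    except Exception as exc:
        return False, f"{type(exc).__name__}: {exc}"

feedback = None
attempts = 0
while attempts < 3:
    attempts += 1
    ok, output = try_code(fake_model(feedback))
    if ok:
        break
    feedback = output   # the error text goes back into the context
print(attempts, output)
```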

The Context Window Problem

Both hosts spend significant time on this because it’s the most consequential practical constraint. Every tool call result, every model response, every file chunk: all of it accumulates in the context. When the model approaches its limit, it compacts by summarizing older parts of the conversation to free up space. The problem is that summarization loses detail, and in code, details matter. Jason describes situations where, after compaction, the model repeated a question it had already asked. The thread of reasoning had been compressed away.
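A rough sketch of what compaction does, under heavy assumptions: real tools use a proper tokenizer and ask a model to write the summary, whereas here `count_tokens` just counts words and `summarize` (both hypothetical) keeps the first three words of each message.

```python
def count_tokens(text: str) -> int:
    """Crude stand-in for a tokenizer: one token per whitespace-separated word."""
    return len(text.split())

def summarize(messages: list[str]) -> str:
    """Hypothetical summarizer: keeps only the first few words of each message."""
    return "summary: " + " | ".join(" ".join(m.split()[:3]) for m in messages)

def compact(context: list[str], limit: int, keep_recent: int = 2) -> list[str]:
    """If the context exceeds the limit, replace older messages with a summary."""
    total = sum(count_tokens(m) for m in context)
    if total <= limit or len(context) <= keep_recent:
        return context
    older, recent = context[:-keep_recent], context[-keep_recent:]
    return [summarize(older)] + recent

context = [
    "user: rename the parse_config function to load_config everywhere",
    "tool: grep found 14 call sites across 6 files",
    "model: which module should own load_config?",
    "user: keep it in settings.py",
]
compacted = compact(context, limit=20)
print(compacted)
```

After compaction, the detail that there were 14 call sites is gone from the context, which is exactly the kind of loss the hosts describe.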

The mitigation is task decomposition: break large requests into smaller, focused subtasks. Each subtask runs in a fresh, narrow context. The model is smarter, faster, and less likely to go sideways. This isn’t just a technical workaround; it’s a discipline that produces better results structurally, not just computationally.

AGENTS.md and Code Hygiene

Most agentic tools look for a file called AGENTS.md in your project directory, recursing up the file tree to collect all of them. Whatever you put there gets prepended to every session. This is where you encode standing instructions: always run the unit tests after significant changes, use the virtualenv Python rather than the system one, keep files under a certain size, and break them up semantically if they grow too large.

Jason learned this last rule the hard way. He told the agent to split a large file when it got too big and ended up with cube_part1.py and cube_part2.py. He had to go back and add explicit instructions: splits must be semantically meaningful, not just mechanical. The AGENTS.md file is where you enforce good habits so you don’t have to repeat them, and where you gradually accumulate the lessons the agent would otherwise keep needing to relearn.
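For the curious, the lookup described above might look roughly like this. It is a sketch under assumptions: each tool has its own rules for which AGENTS.md files it reads and in what order, and the function names here are hypothetical.

```python
import tempfile
from pathlib import Path

def collect_agents_files(start: Path) -> list[Path]:
    """Return every AGENTS.md found from the filesystem root down to `start`."""
    start = start.resolve()
    found = [d / "AGENTS.md" for d in (start, *start.parents)
             if (d / "AGENTS.md").is_file()]
    return found[::-1]   # outermost first, so the nearest file comes last

def build_preamble(start: Path) -> str:
    """Concatenate the collected files into one block of standing instructions."""
    return "\n\n".join(p.read_text(encoding="utf-8")
                       for p in collect_agents_files(start))

# Demo in a throwaway directory with one project-level and one package-level file.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    pkg = root / "pkg"
    pkg.mkdir()
    (root / "AGENTS.md").write_text(
        "Run the unit tests after significant changes.", encoding="utf-8")
    (pkg / "AGENTS.md").write_text(
        "Split large files along semantic boundaries.", encoding="utf-8")
    preamble = build_preamble(pkg)
print(preamble)
```

Putting the nearest file last is an assumption of this sketch; the idea is that more specific instructions get the final word.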

What Still Goes Wrong

Beyond context limits and compaction, there are still hallucinated API calls. The model assumes a method exists because the class pattern suggests it should. There’s also a tendency toward spaghetti: agents will happily duplicate logic rather than refactor it into a shared function, because deduplication requires a kind of global awareness that’s expensive in tokens. You can push back on this in AGENTS.md, but it requires active enforcement.

There’s also the question of when to push forward versus when to start over. Sometimes something in the context window pollutes the model’s reasoning, and it gets stuck in a loop. Reverting to an earlier Git commit or simply closing the session and restating the question with fresh framing often works better than trying to argue the agent out of a bad state. And since there’s randomness built into the model (temperature and other factors), you’re not guaranteed to get the same answer twice, even from the same starting point, which means a fresh attempt isn’t wasted effort.
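If the temperature part sounds abstract, this small sketch shows the standard idea of temperature-scaled sampling. The logits and the three candidate "fixes" are made up for illustration, and real models sample over tokens rather than whole answers.

```python
import math
import random

def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Turn raw scores into probabilities; a higher temperature flattens them."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)                      # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

logits = [2.0, 1.0, 0.5]                    # hypothetical scores for three candidates
cold = softmax_with_temperature(logits, 0.2)
warm = softmax_with_temperature(logits, 1.5)

# Unseeded on purpose: two runs from the same starting point can differ.
rng = random.Random()
picks = [rng.choices(["fix A", "fix B", "fix C"], weights=warm)[0] for _ in range(5)]
print(cold, warm, picks)
```

At the low temperature almost all the probability sits on the top candidate; at the higher one, the alternatives get a real chance, which is why a retry can land somewhere different.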

Git, they argue, isn’t optional here. Use it even on solo projects, even if you never push to a remote. It gives the agent something to revert to, makes rollbacks cheap, and produces detailed commit messages that capture why something was done, context that won’t be inferable from the code alone.

On the Future of Software Engineering

Neither host thinks the job of software engineer disappears. The demand for systematic thinking, turning abstract problems into concrete solutions, has never declined historically, and there’s no obvious reason it would now. What changes is the nature of the work, as well as the profile and role of the software engineer. Rote porting tasks, mechanical boilerplate, the kind of work where the spec is clear and the output is predictable, are the most vulnerable. What remains is judgment: knowing what to build, recognizing when something is wrong, understanding why one architecture holds up under load while another doesn’t.

Jason makes a strong point: Salesforce isn’t just a database with a front end. It’s a decade-plus of learned lessons, role-based access control added after an intern wiped a client’s data on their last day, audit trails added after something went wrong that no one anticipated. That institutional knowledge isn’t in any GitHub comment. An agent can reproduce the surface, not the scar tissue. Companies that cancel their SaaS contracts to rebuild in-house are, in his view, setting themselves up for a round of security incidents before quietly returning.

Patrick’s framing: curiosity, taste, and the persnicketiness to keep going when things don’t match expectations. Those are the traits that will matter. The lines between disciplines may blur as engineers reach further outside their domain, but the difference between someone who builds something people actually want and someone who just ships tokens to disk will remain.

Teaching Faculty Hiring

My co-advisor, Michael Hilton, was on my favorite academic podcast, The CS-Ed Podcast, discussing a CRA memo he co-authored: Best Practices for Hiring Teaching Faculty in Research Computing Departments. What a great combination.

These ideas got me thinking:

Michael shares his own path from community college at Grossmont College, to transferring to San Diego State University, to nearly a decade as a software engineer, then returning for a master’s at Cal Poly San Luis Obispo and a PhD at Oregon State University before becoming a teaching professor at CMU.

Unlike tenure-track hiring, teaching-track processes and norms are often reinvented at every institution, forcing candidates to prepare entirely different materials for every search. Michael explains that, while tenure-track hiring benefits from widely understood community norms, teaching-focused positions often lack that shared structure, creating confusion and unnecessary burden for both candidates and departments.

From there, they walk through the hiring process step by step:

Writing job ads that don’t assume candidates can read between the lines about degree requirements, prior industry experience, contract length, long-term job security, or even what “professor of practice” is supposed to mean at a given institution.

Building evaluation rubrics before candidates arrive so mixed research-and-teaching committees align on what actually matters for the role, rather than evaluating candidates through incompatible expectations. They also discuss how rubrics reduce bias and help departments assess candidates based on the realities of the job itself.

Rethinking the job talk format so it reflects real teaching conditions rather than a faculty audience pretending to be students. Michael makes a compelling case that the traditional “teach faculty as if they were undergraduates” model often produces artificial dynamics: some faculty disengage because they already know the material, while others turn the session into a search for edge-case gotcha questions. Instead, he argues for more meta-level discussions about how candidates would actually structure and teach a course.

Structuring interview visits in ways that help both sides assess fit accurately, including letting candidates sit in on a live class so they can see what teaching at the institution actually looks like without having to prepare an entirely new lecture.

They also discuss the mismatch between fall and spring hiring cycles, which creates a particularly painful situation for teaching-track candidates navigating very different institutional timelines. Teaching-focused positions at liberal arts colleges and teaching-centered institutions often hire in the fall, while many R1 institutions conduct searches much later in the spring. As a result, candidates are frequently forced to make major career decisions before they have the chance to evaluate the full range of opportunities available to them. This becomes even more difficult for dual-academic-career couples, especially when one partner is on the traditional tenure-track market and the other is pursuing teaching-focused roles. In many cases, teaching-track offers arrive months before tenure-track interviews and offers even begin, making coordinated decision-making nearly impossible.

They also discuss the use of exploding offers as a pressure tactic: short-deadline offers designed to force candidates into accepting positions before they can complete other interviews or compare opportunities. Michael argues that this dynamic disproportionately affects teaching-track candidates because of the fragmented timelines and lack of shared hiring norms across institutions.

One especially interesting point is how some R1 institutions are now experimenting with both fall and spring hiring cycles after repeatedly losing strong candidates who had already accepted offers elsewhere before the R1 hiring process had even started.

While I recommend reading the full memo, since it’s full of practical guidance, this episode adds the stories and nuance that make clear why getting this right matters for departments trying to find great candidates and for candidates trying to find the right fit.

I agree with Kristin that, regardless of where you are in the hiring cycle, this is an important episode. Michael goes into details that don’t appear in the memo and really highlights why it’s worth spending a little extra time to make hiring better each time around.

Quote from Michael that I liked:

I always tell my students who are going on the job market that you’re interviewing them as much as they’re interviewing you.

What skills do people need to successfully code with AI? CS + writing skills

Programming without writing a single line of code: this is the promise of vibe coding. A new study by Sverrir Thorgeirsson, Theo B. Weidmann, and Professor Zhendong Su from ETH Zürich examines which skills are needed to build simple apps with AI agents.

The results show that participants with stronger computer science backgrounds achieve better outcomes. Writing ability also has a clear impact, while frequent everyday use of language models does not improve performance.

The work highlights the role of human expertise in prompting, critical assessment, and system understanding. It shows how computer scientists shape effective collaboration between humans and AI.

🔍 Resources for Learning CS

→ If LLMs can generate the code, what’s left for you?

In this keynote, Vicki Boykis argues the answer is everything that actually matters and none of it comes quickly: data sense, system design, and the hard-earned intuition to recognize when things are wrong.

→ The Laws of Software Engineering book is out

This new book by Milan Milanović distills more than 20 years of hard-earned software engineering lessons into 56 laws covering architecture, teams, quality, scalability, and decision-making. It connects ideas such as Gall’s Law, Brooks’ Law, Goodhart’s Law, the Two-Pizza Rule, and the Cobra Effect through real-world industry experience.

→ Allocating on the Stack

This article describes allocation optimizations in the Go compiler. Even if you don’t write Go, these detailed articles are a great read. Speaking of Go, Burak Karakan argues that Go is unusually well-suited for AI coding agents.

🔍 Resources for Teaching CS

→ Python IDEs for teaching

What IDEs are people using nowadays for introductory Python courses, especially now that tools like PyCharm have started shipping increasingly aggressive AI-assisted code completion?

This week I’ve been gathering some interesting projects from the current ecosystem of educational Python IDEs and browser-based programming environments:

TigerPython: minimalist browser-based Python IDE running fully client-side with Pyodide.

TigerPython Parser: open-source localized error-message system.

BlockPy: dual block/text Python environment with tracing, datasets, autograding, and browser execution.

BlockMirror: block ↔ text synchronization editor.

CORGIS datasets for education.

Pedal autograding framework.

Drafter educational web library.

Strype: structured editor between blocks and text.

Hedy: gradual programming language for education.

Wordplay: multilingual educational programming environment.

Other tools:

There’s also an emerging tension between professional tooling and pedagogical tooling. The “best IDE for software engineers” is not necessarily the best IDE for someone learning loops, state, functions, or debugging for the first time.

Really interesting space right now for computing education, and thanks for your contributions: David Reed, Kevin Lin, Cory Bart, Tamara Nelson-Fromm, Leandro Silva Galvão de Carvalho, Manuel A. Pérez-Quiñones, Nicholas Weaver, Ken Arnold, and Neil Brown.

→ Distributed Systems lecture series

Martin Kleppmann (mentioned today in the industry section) published an awesome free Distributed Systems lecture series some time ago. If you’re teaching it in Fall 2026 or Spring 2027, it’s a great way to review some of the most important topics.

🦄 Quick bytes from the industry

→ Great episode with Martin Kleppmann

What a great episode of The Pragmatic Engineer Podcast with Martin Kleppmann, academic, researcher, and author of the book Designing Data-Intensive Applications:

Martin Kleppmann argues that academia and industry are not opposing worlds, but complementary environments with different incentives and time horizons. In academia, researchers have the freedom to pursue long-term ideas that may not be immediately commercially viable, such as his work on “local-first software,” which aims to give users more control over their own data instead of locking them into cloud platforms. He sees this as an opportunity to prioritize what is best for users rather than what maximizes business incentives.

It’s wonderful to get to work on interesting engineering and computer science problems while at the same time, like trying to pursue this higher level vision.

Kleppmann also reflects on teaching and the Cambridge system, which he describes as traditionally theoretical and heavily focused on fundamentals rather than rapidly changing technological trends. He notes that computer science education at Cambridge still emphasizes ideas that have remained relevant for decades, such as distributed systems principles and formal reasoning, instead of constantly chasing the latest industry fashions. His own teaching style mirrors this approach: lectures are often theoretical and based on careful reasoning about algorithms, failures, and trade-offs, though he also values hands-on engineering experiences in areas like cryptographic protocol implementation. He appreciates that this environment encourages students to think rigorously from first principles rather than simply applying fashionable tools uncritically.

At the same time, he values practical engineering and believes academia should stay connected to real-world problems. He argues that industry often moves too quickly and relies on trends or secondhand ideas without deeply reasoning through trade-offs, while academia encourages more rigorous and first-principles thinking. Ideally, people should move between both worlds throughout their careers, since industry experience gives researchers broader perspective, and academic training develops critical thinking that can improve engineering practice.

On AI, he sees these tools as potentially very useful for feedback, brainstorming, and software development, but believes some forms of learning and thinking still require direct human effort. For him, writing is part of the thinking process itself, which is why he still writes his books manually. In education, he thinks AI creates a difficult challenge: universities want students to learn how to use these tools responsibly, but also need to preserve the intellectual struggle that is essential for real learning.

→ I loved the conversation with Félix Ruiz on the Tengo un Plan podcast

I recommend this episode of “Tengo un Plan” if you’re into startups and entrepreneurship. The title is a bit misleading. It’s not about easy money or get-rich-quick schemes, but about the story of Tuenti (among others). So nostalgic! And Félix Ruiz really had great vision.

🌎 Computing Education Community

Shuchi Grover (May 12): Raspberry Pi seminar on K–12 data & computing competencies and AI integration across the curriculum. Pre-register for this seminar on Zoom.

Marie Devlin seeks instructors for a 10–15 min survey on GenAI’s impact on capstone/team projects.

Amanda Holland-Minkley (MAP-CS) invites faculty to a 10-min survey on curriculum development and review processes.

The Citadel is hiring a tenure-track Assistant Professor or Instructor in Cyber Operations. Teaching-focused role with strong cyber labs, NSA CAE designation, and start dates across 2026–27 in Charleston, SC.

The Computer Graphics & Animation Research Projects (CG&ARP) program returns May 18 with a 6-week international research experience pairing undergrads, grad mentors, and faculty on graphics/animation projects.

EngageCSEdu released a special issue on game-based learning and serious games in computing education.

EduCHI is seeking expressions of interest to host EduCHI 2027 and 2028 following its addition as a SIGCHI-sponsored conference. EOIs due May 31. Shared by Olivier St-Cyr.

SIGCSE Virtual 2026: Round 1 deadline today (11:59 p.m. AoE): Panels, Papers, Special Sessions, and Working Groups.

🤔 Thought(s) For You to Ponder…

Last week, while talking with a Brazilian friend, we got into a discussion about Brazil’s Bolsa Família program. Since last Friday, reflecting on the dignity of work in light of the Feast of Saint Joseph the Worker, I’ve been thinking more about government assistance. The dignity of work reminds a person that they are a child of God, with abilities, talents, and a purpose. If a government tries to support everyone primarily through subsidies, it can end up demeaning and humiliating them. There’s a difference between offering help and creating dependency. At its core, that kind of dependency can resemble a form of slavery. When a government does this, it is not truly promoting the social good; instead, it risks building a system of dependency aimed at keeping itself in power.

Anthropic is building an A+ team:

I’ve been wondering what day-to-day AI adoption looks like in companies. In my experience, getting an entire organization up to speed on any new tool or software is a huge challenge, but with AI, I’m not so sure. One question I’ve started asking friends at different companies is whether their organizations are actually integrating AI into their operations and if so, whether the benefits show up more in efficiency and productivity or in direct financial results, and whether it’s mostly being used for pilot projects or for things that have actually made it into production.

I appreciate Hadden Turner’s reminder about why we need more, not less, friction in our lives:

We easily forget that friction isn’t always bad; in fact sometimes it is essential.

Reinstating good and necessary friction into our lives, making them frictionful in ways that direct us towards the good life rather than making them a frictionless path towards greater industrialisation, is one of the greatest needs of today.

What we need is not what we want; and what we want is not what we need.

I’d say it’s not that Claude Security can find vulnerabilities that would be impossible for a person to detect, but rather that it can uncover, at scale, issues a person could have found, just not likely in time.

Reading Tim’s letter. Really strong, both in substance and in how it’s written.

I have no idea whether Claude Design is eating Figma’s lunch, but this article by Martin Alderson got me thinking: it’s not necessarily the company with the best standalone product, the most loyal community, or the most polished interface that wins anymore. It’s the one that controls the model, the compute, and the distribution. So could Figma end up getting absorbed into the workflow of a conversational interface like Claude?

Pepe Cruz Novillo has passed away. A legend of Spanish graphic design. RIP.

Gerard García from Deale talks with Joan Tubau about judgment and criterion in financial analysis. He points out that AI will automate a large portion of the work, but stresses that it’s still essential to know what you’re trying to achieve, to pick up on subtle nuances, and to have enough knowledge to judge what’s right and truly understand what the AI is producing. He adds that AI will make analysts more productive, allowing them to focus on higher-value tasks. At the same time, it also requires people to learn much faster. On the flip side, there’s a risk of losing some of the expertise that traditionally came from starting with a blank page or challenging assumptions. He also highlights that AI is leveling the playing field, since even small and mid-sized companies can now access advanced tools. They also discuss the situation for college students and how the way they gain experience is changing, as some traditional paths like heavy Excel work or entry-level roles are fading, even though they used to help build a professional mindset. This leads them to place more importance on curiosity in education, learning by observing others, and committing to continuous learning. They conclude that there’s no need to panic, as new types of jobs will emerge.

I enjoyed this take from Sara Perla on praying to St. Joseph and asking him for a husband. Definitely worth a read.

The only reason I can keep trusting that Joseph lives in heaven and intercedes for me is that I have people in my life who are a bit like him. People who do things silently but who mostly keep their thoughts to themselves. They mail packages to me when they know I’ve had a rough time. They text me funny memes or offer to pick up coffee for me. Their kids start calling me “Aunt Sara” even though no one told them to. They may not share a lot of what they are thinking or feeling at a given moment — apparently, I do enough of that for all of us — but they are present. They are there. And so is St. Joseph.

St. Joseph has been silent when I have asked for his help in finding a husband, but he has not been entirely silent — he has supported me when I needed it, in ways that I did not know to ask. Just like any true friend.

It’s always a joy to hear from the Sisters of Life. This time, they teamed up with Matt Fradd to respond to real Reddit posts about crisis pregnancies and abortion. They emphasized the importance of listening first, offering practical support alongside spiritual care, and reminding women of God’s mercy and the Church’s healing resources. They also shared stories of women who found healing, reconciliation, or unexpected support after choosing life, and pointed listeners to resources like sistersoflife.org, Rachel’s Vineyard, and John Paul II Healing Center.

The profession of being an airline pilot. Ramon Vallès explains it brilliantly:

I make it a habit to say goodbye to all my passengers at the door when the flight ends. It’s my way of keeping the human side of my job alive. The best part of what I do is getting to carry people from one place to another.

My university’s Newman Center newsletter shared this talk on discerning the vocation of marriage on Sunday. You might be interested in attending if you’ll be in Houston at the end of May.

The memory shortage isn’t just threatening the smartphone and laptop industries. It’s hitting research labs too, Heidi Ledford reports for Nature. Major memory manufacturers (Samsung, SK Hynix, Micron, and others) are shifting their production toward data centers and AI chips, where profit margins are higher. Meanwhile, big tech companies building AI models are buying up massive amounts of memory for servers and GPUs.

Tools change all the time, but if you’re curious, these are the ones the Vercel design team is using right now, as shared by Hannah Hearth.

I really liked the conversation between Marta García Aller and Carlos Alsina about the importance of listening.

I loved this line from Enrique García-Máiquez: Admiration isn’t identification, but a recognition of talent and integrity.

I think, without even meaning to, one of my favorite Christian singers, Santiago Benavides, got me thinking about just how incredibly important a team is, not only in research, but in industry as well:

For me, the most important thing isn’t just playing with musicians. It’s playing with friends (who also happen to be incredible musicians). Honestly, I feel like if these songs resonate at all, it’s largely because they come from that deep kind of friendship.

Jorge Galindo writes about how AI is reshaping work and, more broadly, HCI here:

Fernando Arancón, Alba Leiva, and David Gómez always surprise me. Now, with an episode about the Tuareg people. A very interesting topic.

Katie McGrady has become my go-to source in English whenever something happens in the Catholic Church. Her analysis resonates with me every time I listen to or read her.

I read this article about how Alejandro Piad, whom I deeply respect, is currently using AI in his work as an academic/researcher. In case you’d like to explore further:

Very nice piece by Leonardo de Moura: When AI Writes the World’s Software, Who Verifies It?

The goal is a verified software stack: open source, freely available, mathematically guaranteed correct.

Great read. Olivier Girardot:

Sometimes we want the boring stuff to stay boring.

My favorite terminal Warp is now open-source:

📌 Research Corner

I asked my students who didn’t use AI why they chose not to on an assignment that had an AI assistant built into the sidebar, and here are the patterns I noticed:

Workflow preference / low perceived difficulty: they used external tools (like VS Code) but not the in-platform AI.

Intrinsic motivation: the goal was to solve it on their own, not just get to the answer.

Independence-first approach: they would’ve used AI if they’d gotten stuck, but they didn’t need to.

Access constraint, not a deliberate choice: their independence was externally imposed.

What if your SQL query returned a chart instead of a table? ggsql adds visualization clauses directly to SQL, turning analysis and plotting into a single step. It’s still early, and it was a bit of a pain to install, but the workflow shift is interesting. I’ve been testing it with my current project!

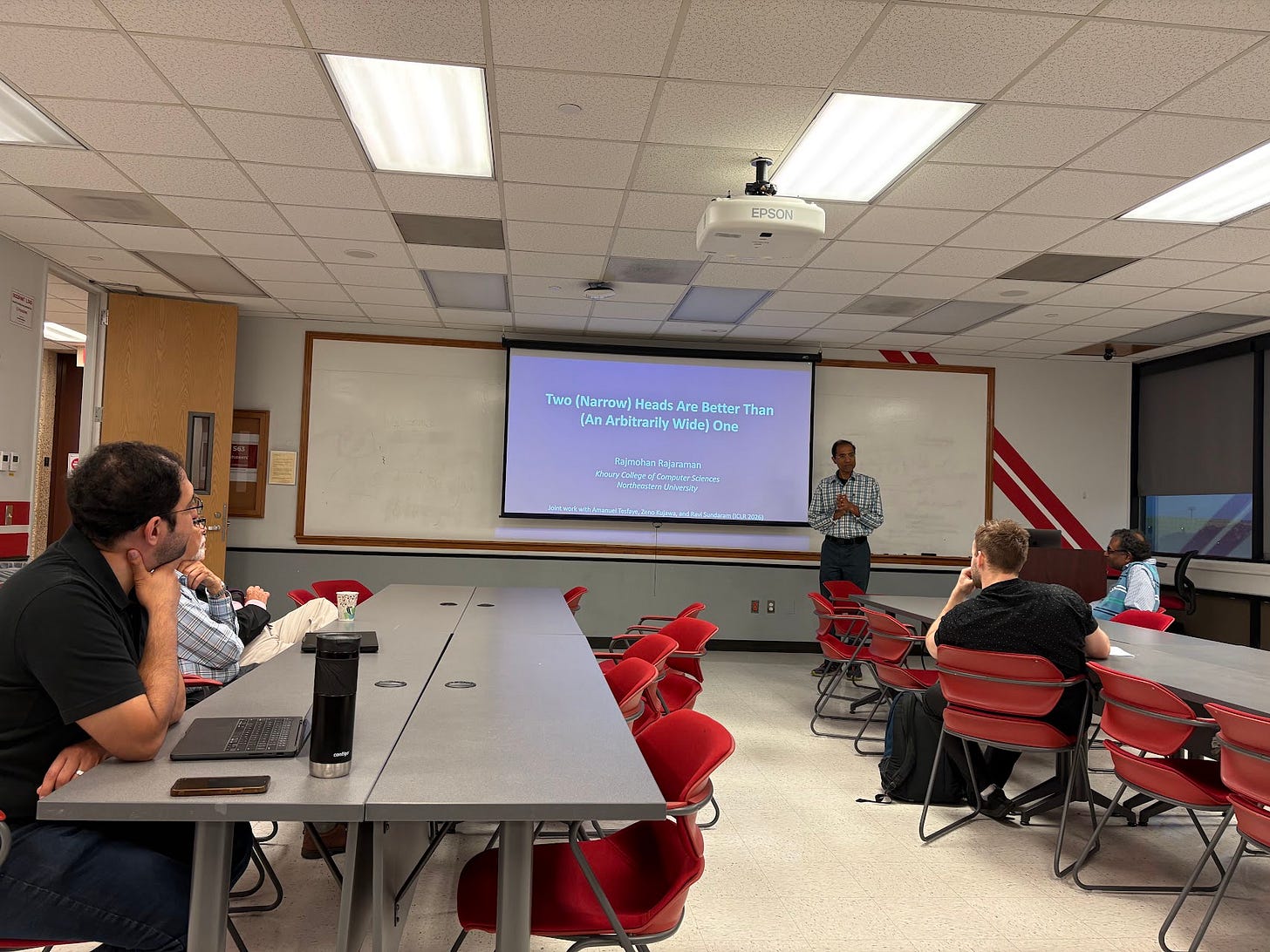

I attended a seminar last Friday given by Dr. Rajmohan Rajaraman from Northeastern at UH. I left understanding a little more about the dense world of transformers. The contributions of some faculty in the room made the session even more stimulating. It was worth coming to campus on that gray and rainy day in Houston.

Waymo’s self-driving cars still rely on human support behind the scenes when the AI runs into complex or ambiguous situations, which I imagine happens pretty often in real urban environments. I like this example because it highlights that you can’t fully remove the human in the loop from the process (think grading in education).

I’ve been reading Nicholas Gardella’s PhD dissertation, Responsible and Equitable Use of AI Code Generators in Computer Science Education. It’s also available as a free audiobook. I especially liked that it explores questions of trust, well-being, and how AI is changing the experience of learning programming. It also compares human-human with human-AI pair programming in a really interesting way. Congratulations on landing a tenure-track Assistant Professor position in Computer Science at Washington and Lee University after your graduation, Nicholas!

This weekend I want to bid for SIGCSE Virtual 2026 papers. I’ll be one of the reviewers!

🪁 Leisure Line

Catching up with Minh and talking about HPC, university, AI, and life was a perfect start to the week.

Cheered on St. Thomas varsity rugby as they took on Celina in the finals at Strake Jesuit. They ended up losing, but it was a great game all the way to the end.

Last few days in Houston before heading back to Europe for the summer next week. Just enough time to catch up with Edu. Thanks for the great conversation, my friend!

Beyond the regulations, the clipping, and the whole hybrid side of things, the Miami GP was genuinely entertaining and fun to watch. It definitely improved the show. There were battles all the way to the end, position changes, and plenty of on-track action (“more driver, less software”). As for the race itself, Max Verstappen was right on the limit, but the guy is insane. He’s still firmly in the fight. It’s a joy to see the top four teams so close together. The big surprise was Antonelli, who looks incredibly solid for someone with his level of experience, and then there’s Russell, who you definitely can’t count out yet. Colapinto delivered an incredible performance; I was also happy to see Carlos and Albon score points. Glad for Fernando too, and for both Aston Martins finishing the race and actually battling on track a bit. I can’t imagine the pressure they’re under.

📖📺🍿 Currently Reading, Watching, Listening

I’d been meaning to read Confessions by St. Augustine for a while, but kept putting it off. I finally started getting into it through this audiobook, and it’s really winning me over. It feels like it came at just the right time.

A wonderful short film created by the team behind Papirola. This group of animators is on a roll right now and ranks among the very best in Spanish animation in recent years.

This song, which ties into today’s quote, got me thinking about the difference between happiness and peace: As I heard Raimo Goyarrola say not long ago, I don’t really know what happiness is; I’d rather ask: “Do you have peace in your heart? Are you living in peace?” Are you happy? I mean, think about an action movie… it ends, and that’s it, it’s over. Sure, I was happy for a moment, but is that really happiness? I’m not so sure. Now, peace. I do know what that is: living in peace.

💬 Quotable

Happiness is only experienced in moments, and you don’t realize you had it until it’s already gone.

― Gabriel García Márquez

🌐 Cool things from around the internet

A collection of links to stuff I think is worth sharing.

🔗 Isadecuppis work — few designers have the level of taste that Isabella De Cuppis does.

🔗 White Circle — control your AI.

🔗 AGENTS.md — a simple, open format for guiding coding agents.

🔗 Pi Coding Agent — a minimal terminal coding harness.

🔗 Web Rewind — an interactive journey through 30 years of the web.

🔗 Detail — your codebase is full of bugs.

🔗 p5.js — a friendly tool for learning to code and make art.

🔗 Margins — a beautifully designed reading companion for people who love books.

🔗 Landsat — your Name In Landsat.

🔗 BookRadio — it collects audiobooks that are in the public domain.

Issue #43 of Computing Education Things was written while listening to:

🔗 Quick Links

🎧 Listen to Computing Education Things Podcast

💌 Let’s talk: I’m on LinkedIn or Instagram

If you’re enjoying Computing Education Things, please like, comment, or share this post! You can also support this work through Buy Me A Coffee. And if you’re finding value in the newsletter, consider forwarding it to a friend, colleague, or family member to help the community keep growing. Thanks for reading!