#44 — How to keep people engaged for hours

Memorable moments, cognitive anchors, motivation as content, and not being boring

Reflections

David J. Malan is a Harvard professor best known for CS50, the introductory computer science course he has taken to another level online. Ryan Peterman interviewed him, and they talked about CS50, the skills that matter, how to make classes engaging, and the impact of AI on computing education.

I’ll focus on three of the topics they discuss in the conversation:

How to Make Long-Form Lectures Engaging (Online and In-Person)

From a pedagogy standpoint, CS50 is a three-hour class that many people watch online, which means students have absolutely no obligation to stay. And yet they stay and, more importantly, they remember it. This doesn’t apply only to remote learning; it works in person too. Why?

Malan attributes it to what he calls memorable moments. The phone book example illustrates this well. He tears a phone directory in half to demonstrate the efficiency of binary search: each tear throws away half of the pages that are left to search. Almost nobody uses a physical phone book anymore, and yet the example still works because it translates an abstract algorithm into a physical gesture. The object’s obsolescence doesn’t matter, because the structure is analogous, and that is enough.
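To make the phone book gesture concrete, here is a minimal binary search sketch over a sorted list of names. The function name and the step counter are illustrative assumptions, not anything from the lecture: each loop iteration plays the role of one tear, discarding half of the remaining range.

```python
def find_in_phone_book(names: list[str], target: str) -> tuple[int, int]:
    """Return (index, steps); index is -1 if target is not in the sorted list.

    Each step "tears" the remaining range in half, like the phone book demo.
    """
    lo, hi = 0, len(names) - 1
    steps = 0
    while lo <= hi:
        steps += 1
        mid = (lo + hi) // 2
        if names[mid] == target:
            return mid, steps
        if names[mid] < target:
            lo = mid + 1  # target can only be in the right half
        else:
            hi = mid - 1  # target can only be in the left half
    return -1, steps
```

For a book of 1,024 sorted entries, no lookup needs more than 11 steps, whereas flipping page by page could take up to 1,024. That gap is exactly what the tearing makes visible.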

He says the goal is not entertainment for entertainment’s sake, nor is it simply about engagement or keeping people’s attention, although there is definitely a performative element to avoid boredom. The deeper goal is to create cognitive anchors: things students can latch onto in their memory.

The brain retains information better when it is attached to a concrete image, a physical event, something that breaks the monotony of abstraction. When a computing student sees an older student physically acting out the bubble sort algorithm on stage, they suddenly have somewhere to place the concept. Later, when they forget the formal definition, they may still remember the image. And from that image, they can reconstruct the definition.
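The stage version of bubble sort maps almost line for line onto code. The sketch below is a standard bubble sort written for this summary (the name and the early-exit check are my own choices): volunteers standing in a row compare themselves with their neighbor and swap places when they are out of order, pass after pass, until nobody needs to move.

```python
def bubble_sort(values: list[int]) -> list[int]:
    """Return a sorted copy by repeatedly swapping adjacent out-of-order pairs,
    the way volunteers on stage swap places with their neighbor."""
    items = list(values)  # work on a copy so the caller's list is untouched
    n = len(items)
    for end in range(n - 1, 0, -1):
        swapped = False
        for i in range(end):
            if items[i] > items[i + 1]:
                items[i], items[i + 1] = items[i + 1], items[i]
                swapped = True
        # After each pass, the largest remaining value has "bubbled" to index `end`.
        if not swapped:
            break  # a pass with no swaps means the line is already in order
    return items
```

For example, `bubble_sort([5, 2, 7, 4, 1, 6, 3, 0])` returns `[0, 1, 2, 3, 4, 5, 6, 7]`. The image of people shuffling along the line is what students can later use to rebuild the nested loops.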

What Malan is really doing is teaching through metaphor. These structures allow students to understand something new in terms of something already familiar, such as using physical activities to demonstrate sorting algorithms and help students build intuition for how those algorithms work. Each of these gestures communicates the same underlying message: “This concept that seems arcane is actually something you already understand, just under a different name.”

They also discuss the tradeoffs of this method and the tension between theatricality and content density. If you spend time making binary search memorable, perhaps you are sacrificing the amount of material you could otherwise cover. Students with prior experience sometimes notice this, and some even express frustration about it. Malan’s position is that the tradeoff is worth it because the goal is not to maximize the number of concepts transferred, but to maximize the number of concepts that actually stick.

A dense lecture with no interaction that nobody remembers has a worse pedagogical ROI than a more memorable lecture that creates durable cognitive anchors.

Finally, Malan admits that much of his energy on stage comes from not wanting to stand in front of a bored audience. He genuinely cares about what happens in the room. Motivation itself becomes part of the content: the hours students will spend later that week working on problem sets require fuel. That means the lecture is not just a moment of instruction; it is also a moment of emotional contagion. If students leave a three-hour lecture without wanting to explore the material on their own, then the lecture has failed, even if they technically understood everything that was explained. That is the real goal: for students to walk back to their dorms excited to work on their problem sets. That enthusiasm, that sense of passion, is not an accident or simply a personality trait. It is the result of a class intentionally designed to produce it.

The Knowledge That Will Matter Most in the Future

What’s the point of learning C or pointers if you’re never going to use them in production? For Malan, the answer is epistemological. The distinction he draws is between using and understanding. A software engineer who does not understand the layers of abstraction they work with is not a complete engineer, because they are operating tools without understanding the machinery underneath.

What Malan is really arguing is that the knowledge that endures is not knowing how to implement a hash table in C, but having built one yourself and understanding why it exists, what problems it solves, and what tradeoffs it involves. That experience of building something from scratch, even if you never do it again, shapes an intuition that helps you, for example, understand higher-level languages later on. It is the difference between someone who knows that dict exists in Python and someone who understands what lies beneath that single line of code.
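To show what “lies beneath” a dict, here is a toy hash table built from scratch. It is a teaching sketch under simplifying assumptions (string keys, integer values, separate chaining, a resize threshold I picked arbitrarily), not how Python implements dict: CPython’s dict uses open addressing rather than chains. It still surfaces the ideas Malan is pointing at: hashing a key to a bucket, handling collisions, and resizing to keep lookups fast.

```python
class HashTable:
    """A toy hash table using separate chaining: each bucket is a list of (key, value) pairs."""

    def __init__(self, capacity: int = 8) -> None:
        self._buckets: list[list[tuple[str, int]]] = [[] for _ in range(capacity)]
        self._size = 0

    def _bucket(self, key: str) -> list[tuple[str, int]]:
        # hash() turns the key into an integer; modulo picks one of the buckets.
        return self._buckets[hash(key) % len(self._buckets)]

    def put(self, key: str, value: int) -> None:
        bucket = self._bucket(key)
        for i, (k, _) in enumerate(bucket):
            if k == key:
                bucket[i] = (key, value)  # existing key: overwrite in place
                return
        bucket.append((key, value))  # new key (possibly colliding with others)
        self._size += 1
        if self._size > 2 * len(self._buckets):
            self._resize()  # keep chains short so average lookups stay fast

    def get(self, key: str) -> int:
        for k, v in self._bucket(key):
            if k == key:
                return v
        raise KeyError(key)

    def _resize(self) -> None:
        # Double the bucket count and re-insert every pair, since bucket indices change.
        pairs = [pair for bucket in self._buckets for pair in bucket]
        self._buckets = [[] for _ in range(2 * len(self._buckets))]
        for k, v in pairs:
            self._bucket(k).append((k, v))

    def __len__(self) -> int:
        return self._size
```

After writing something like this once, the tradeoffs behind `d[key] = value` (collisions, load factor, the occasional expensive resize) stop being invisible.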

What CS50 is trying to produce is not merely programmers, but engineers and citizens capable of reasoning from first principles. The language itself is always an implementation detail. What matters is the ability to decompose problems, diagnose symptoms from the foundations upward, and design solutions with an awareness of system constraints.

This distinction becomes especially urgent now that AI can generate code with a level of competence that already surpasses most junior developers. AI can write the code. What it cannot do is decide what should be built, why it should exist, which architecture makes sense, or what kind of user experience matters. System design, choosing the right data structures, deciding which data is worth collecting based on future business problems: that remains deeply human territory.

Malan also has historical perspective. He lived through the dot-com crash, the blockchain boom, the hype around AR/VR and Google Glass. Each time, the tech world reacted dramatically. And each time, the field eventually stabilized, with no shortage of new problems to solve. The world only becomes more technological, not less. What changes is the nature of human work within that world.

How AI Has Changed Computing Education

David Malan teaches an introductory computer science course at the exact moment when AI tools can complete problem sets better than most of his students. And he has been thinking about this since before ChatGPT became mainstream: GitHub Copilot, with its AI autocomplete, came first.

His response operates on several layers, and they are worth separating.

The first layer is creating a differentiated environment. CS50 has its own AI assistant, cs50.ai, which is intentionally designed to be less useful than ChatGPT. It does not solve the problem for students; it guides them toward the solution. The line is clear: students can use cs50.ai as much as they want, but the moment they open ChatGPT, they knowingly cross a boundary. Instead of trying to detect cheating after the fact, the course redesigns the environment so that cheating requires a deliberate decision rather than simply following the path of least resistance.

The second layer is honesty about the limits of detection. Malan acknowledges that they are not necessarily seeing more cases of academic dishonesty; what has changed is the ability to prove it. Previously, instructors could point to a URL and say: “this code was copied from here.” AI-generated code no longer comes from a traceable source; it is a synthesis of everything. What remains are indirect signals: inconsistencies with a student’s previous work, unusually sophisticated implementations, or, perhaps most revealing of all, code that solves last year’s problem set instead of this year’s.

The third layer is the most important one long term: what does it mean to learn programming when AI can already program? Malan mentions that the very same morning he had been prototyping something with Claude. The collaboration was useful precisely because he already knew enough to recognize that the generated solution was 90% correct and to have the technical conversation necessary to fix the remaining 10%. That remaining 10% is exactly where first-principles knowledge becomes indispensable. Without it, “mostly correct” code becomes production software containing subtle bugs nobody understands.

In Malan’s view, AI automates the least interesting parts of the job: writing unit tests, reading unfamiliar API documentation, implementing boilerplate. What it does not replace is design: deciding what to build, for whom, under which constraints, and with which system architecture in mind for future needs.

Even if software implementation itself became fully automated, problem solving and systems thinking would still matter. Those are precisely the cognitive skills that allow humans to collaborate effectively with AI rather than merely consume its outputs.

There is also a broader psychological effect on enrollment. Fewer students are entering computer science, and the reasons are worth distinguishing. The fear that AI will devalue their skills is the obvious headline, but Malan points out that the contraction of the job market, specifically the disappearance of junior engineering roles, preceded the AI wave and is independent of it. Companies simply stopped recruiting entry-level engineers before AI became the dominant conversation. When the AI wave arrived, it confirmed fears that were already forming. The two causes are related but not the same, and collapsing them into one obscures what is actually happening in the market.

Malan’s response is that this is simply another cycle in a history he has already seen repeat itself: the dot-com crash, blockchain hype, AR/VR enthusiasm. Each time, the field absorbed the disruption and continued expanding because the world keeps becoming more technological, not less.

Other Topics

Beyond these three themes, Malan also recognizes the value of institutions like Harvard in terms of networks and signaling, while arguing that technical learning can sometimes be equally effective online thanks to tools that let students control their own pace.

On pedagogy and learning difficulties, he notes that pointers in C remain one of the hardest concepts for students. He frames struggle not as evidence of inability, but as a natural and necessary part of learning. When students fail to understand something the first time, they should approach it from different angles, search for alternative explanations, or consult different instructors and resources.

At the same time, he also introduces an important nuance: if someone repeatedly struggles without ever finding satisfaction or curiosity in the material, it may be worth questioning whether the field itself genuinely interests them.

Toward the end of the conversation, Malan discusses the future of CS50, reaffirming his commitment to openness and free access while expressing interest in expanding the curriculum toward broader problem solving, mathematics, and even the humanities in order to help students build stronger intellectual foundations.

Finally, reflecting on his own career, he offers students one central piece of advice: explore more broadly and avoid reducing university life to simply completing academic requirements. One of his few regrets is not spending more time pursuing professional experiences outside academia before fully committing to it.

As for book recommendations, he mentions:

and the For Dummies series.

I hope you enjoyed this summary. The production quality of this episode was especially impressive, thanks to the CS50 team’s expertise. It was filmed at the Regent Theatre in Arlington, Massachusetts, home of the Fifty Foundation and the team behind CS50. I invite you to watch the full episode. I think it’s a great conversation to savor slowly.

Taste vs. Criterion/Judgment: The Definitive Take

After months of thinking about the difference between criterion/judgment and taste, this post by José Luis Antúnez feels like the missing piece that finally made it click for me.

With his permission, I’m just going to translate it literally into English, because I think it’s brilliant and there’s really no better way to say it:

For someone without formal training in a discipline, taste is autobiographical. It’s a passive accumulation of whims shaped by where we grew up, what we’ve consumed, and what happens to be trendy. Challenging an “I like it” risks making someone you care about feel as though their identity is being rejected.

Judgment, on the other hand, is historiographical. It’s an active accumulation of reasons that can eventually produce an immediate response we call instinct. It’s knowledge trained over years, nourished by principles, successes, mistakes, and even biases (no one escapes them), all of which shape a point of view that can be argued according to needs, contexts, budgets, and constraints.

If you lead teams, avoid saying “I don’t like it” without explanation. Professional judgment doesn’t eliminate subjectivity entirely, but it does discipline it. We should learn to reason through what we feel without falling into self-deception.

The “Flaky Compiler” Metaphor

Bryan Cantrill and Adam Leventhal invited Brown University professors Kathi Fisler and Shriram Krishnamurthi onto Oxide and Friends to discuss AI in computer science education. The conversation focused on an experimental spring course built around agentic programming, designed to expose students to LLM-powered “flaky compiler” workflows. The goal was to let students experience failures firsthand, develop testing and type-based safeguards, and help faculty rethink introductory CS curricula.

Their motivation was that tools like Claude have already made it impossible to teach computer science the same way it was taught before. Rather than resist that reality, they wanted to collect real data on how students actually use these systems and experiment with new teaching approaches ahead of the Fall 2026 semester. They also pointed to a major “expert blind spot”: instructors often have very little visibility into what students already understand or misunderstand about LLMs.

To explore this, they launched a small pilot course with 20 students selected from 80 applicants. Participants were required to have at least one semester of programming experience. The idea was not to teach students how to code from scratch, but to investigate how far they could go when working with programming agents as collaborators.

Shriram described agentic systems as a kind of “flaky compiler”: incredibly powerful, but fundamentally non-deterministic. The course was therefore designed to teach students how to get reliable results from unreliable systems by leaning heavily on core software engineering principles. Students learned to write precise specifications, design robust automated tests, use types to make assumptions and interfaces explicit, and rely on proper libraries and constraint solvers instead of naive AI-generated solutions. Verification, code review, iterative refinement, and engineering discipline became core practices.
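Here is one way to picture “reliable results from unreliable systems.” The sketch is hypothetical and is not the course’s actual tooling: an explicit type states the interface, a small specification states what correct output means, and a seeded randomized test harness checks any candidate, including “mostly correct” agent output, against that specification. `agent_sort` is an invented stand-in for generated code with a subtle bug.

```python
import random
from collections import Counter
from typing import Callable

# The explicit interface every candidate must satisfy (types as a contract).
Sorter = Callable[[list[int]], list[int]]


def satisfies_spec(inp: list[int], out: list[int]) -> bool:
    """Spec: the output is in non-decreasing order and is a permutation of the input."""
    ordered = all(a <= b for a, b in zip(out, out[1:]))
    return ordered and Counter(inp) == Counter(out)


def check_candidate(candidate: Sorter, trials: int = 500, seed: int = 0) -> list[list[int]]:
    """Run seeded randomized tests and return every input the candidate got wrong."""
    rng = random.Random(seed)  # fixed seed keeps the check itself deterministic
    failures: list[list[int]] = []
    for _ in range(trials):
        inp = [rng.randint(-5, 5) for _ in range(rng.randint(0, 8))]
        try:
            out = candidate(list(inp))  # pass a copy so the candidate can't alter our record
        except Exception:
            failures.append(inp)  # crashing counts as failing the spec
            continue
        if not satisfies_spec(inp, out):
            failures.append(inp)
    return failures


# Hypothetical "mostly correct" agent output: it silently drops duplicate values.
def agent_sort(xs: list[int]) -> list[int]:
    return sorted(set(xs))
```

`check_candidate(sorted)` comes back empty, while `check_candidate(agent_sort)` returns only inputs that contain repeated values. Those are precisely the cases a quick manual glance at a one-shot answer would likely miss. The non-determinism lives in the generator; the check stays deterministic.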

“Flakiness” itself is not new. Software engineers already deal with flaky systems, flaky coworkers, and even flaky versions of themselves. Agentic systems simply introduce another source of unpredictability that has to be managed responsibly. The class embraced this philosophy by operating almost like a design studio: iterative, experimental, and intentionally messy, “like assembling a plane mid-flight.” Students were encouraged to use agentic coding tools, run into failures, reflect on them, and then learn the engineering concepts needed to address those failures.

The instructors shared several assignments from the experiment:

Tetris sequence: Students first used Claude to generate a working Tetris implementation in a single shot. However, when they were asked to build variants, such as reversing gravity or toggling it dynamically, the limitations of the generated solutions quickly became obvious. This pushed students to think more deeply about representation, architecture, testing, and design.

Data analysis assignment: Students started with a small CSV dataset and later scaled up to much larger datasets. The generated solutions often failed to scale properly, creating opportunities to teach databases, performance considerations, and testing strategies.

Requirements checker project: Students built a checker for Brown CS degree requirements, exposing ambiguities in the specifications and highlighting the complexity of grading infrastructure and formalized requirements.

The teaching approach consistently emphasized types as a constraining and reviewable artifact. Instructors encouraged “types-first” workflows in TypeScript so that prompts and generated code would remain understandable, constrained, and easier to review.
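The course worked in TypeScript. As a rough Python analogue (every name here is hypothetical), a types-first workflow might fix the data types before asking an agent for any game logic. Once gravity is a type instead of a hard-coded “+1 row,” variants like reversing or toggling it become a change in one value rather than a rewrite, and the types give reviewers something small and concrete to check.

```python
from dataclasses import dataclass
from enum import Enum


class Gravity(Enum):
    """Direction pieces fall: +1 moves down one row, -1 moves up one row."""
    DOWN = 1
    UP = -1


@dataclass(frozen=True)
class Piece:
    cells: frozenset[tuple[int, int]]  # (row, col) of each occupied square


def step(piece: Piece, gravity: Gravity) -> Piece:
    """Move every cell one row in the current direction of gravity."""
    return Piece(frozenset((row + gravity.value, col) for row, col in piece.cells))


def toggle(gravity: Gravity) -> Gravity:
    """Flip gravity, e.g. for a "dynamically toggle gravity" variant."""
    return Gravity.UP if gravity is Gravity.DOWN else Gravity.DOWN
```

A prompt can then say “implement `step` and `toggle` with exactly these signatures,” which keeps the generated code constrained and easy to review against the types.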

A particularly interesting aspect of the course was its emphasis on peer critique, borrowing from the “crit” model commonly used in design disciplines.

Assessment relied heavily on testing plans, design documents, peer critiques, code reviews, and reflection journals written by the students themselves rather than generated by LLMs.

Students initially reacted with amazement at what LLMs could produce, especially after seeing one-shot Tetris implementations, but that excitement quickly gave way to a deeper awareness of the systems’ unreliability once the requirements became more complex. Many students reported feeling conflicted about relying too heavily on LLMs for learning, and some noticed that excessive dependence reduced their own engagement and understanding. Usage patterns varied widely: some students leaned heavily on the tools, while others deliberately avoided them on principle.

One surprising outcome involved API usage and costs. The instructors had previously purchased a substantial amount of API credits for another course (around $2,500 worth), but actual student usage ended up being far lower than expected. This challenged the assumption that students would simply outsource everything to the model whenever possible.

The key thing is that students are like, “Look, I’m taking this course to learn something. If I outsource everything to GPT, I’m not really going to learn the thing I came here to learn.”

The discussion also touched on faculty concerns about cheating. The instructors acknowledged the issue but argued that students who rely too heavily on AI ultimately undermine their own education. In practice, many students still wanted to genuinely learn and were hesitant to fully hand over the learning process to AI systems.

It’s also about the job market and everything going on right now. Students are really worried that they need to look good on paper or they’re not going to get hired. So there’s a lot of anxiety around grades. And if you know some of your classmates are using LLMs to get assignments done while you’re doing them on your own, you start wondering: if that lowers your grade, what are you supposed to do for your career? That’s definitely something we hear from students too.

Another major debate centered on when and how agentic tools should be introduced into the CS curriculum. Some students argued that novices should not have access to these tools early on, while the instructors explored several instructional models, ranging from fully agentic courses to hybrid approaches where AI use is more constrained. At the same time, they saw significant upside: because routine coding tasks can now be generated quickly, instructors may be able to introduce higher-level software engineering concepts such as testing, usable security, distributed systems, and multi-user design much earlier in the curriculum.

To me, the whole point of undergraduate education is, in some sense, to break you down and rebuild you. – Bryan Cantrill

The conversation also explored broader implications beyond CS majors. Students who take only a single programming course may become dangerously overconfident if they are taught to trust LLM-generated output without learning the principles of verification and testing. The instructors worried that if computer science departments fail to teach rigorous, trustworthy AI-assisted development practices, that gap could instead be filled by YouTubers lacking sufficient engineering rigor.

We need to communicate to students what learning actually means.

Operationally, the pilot course intentionally kept enrollment small and relied on highly interactive teaching methods rather than heavy automation. Students participated in peer reviews, kept reflection journals, developed testing plans, completed live 20-minute code review finals, and engaged in frequent day-to-day interactions with instructors.

By the end of the course, many students reported a deeper appreciation for software design, testing, and the importance of questioning agent outputs rather than trusting them blindly. Some even became more cautious about using LLMs after the experience. The instructors plan to publish their findings, redesign future courses, and launch additional pilot classes for beginners to better understand how agentic tools can be introduced safely and effectively.

The hosts closed the discussion by praising Brown’s willingness to experiment, its institutional flexibility, and the trust it places in students. The overall tone was optimistic: computer science education is changing rapidly, and universities have a responsibility not only to acknowledge that shift but also to teach students how to use these systems critically, rigorously, and responsibly.

What happens if the software survives, but no one remembers why it survives?

I really appreciate Camilo’s clarity in both his explanations and his choice of words. You can clearly sense his academic and well-read background.

This episode explores a concept that was new to me but incredibly thought-provoking: epistemic drift, the gradual erosion of the justification behind a piece of software. AI makes the problem even more acute: as we keep adding features with agents, it becomes harder and harder to capture the traces of the reasoning behind them. Those paths of reasoning get lost, and they are exactly the “justification” Camilo is talking about.

Watch the episode to hear him explain it in depth. Here’s the short version: imagine six features that all still preserve their functionality, but the reasoning behind why something works or how we arrived at that system in the first place can no longer be reconstructed.

The danger is ending up forced into a rewrite, not because the code stopped working, but because we no longer understand why or how it works. And this goes beyond technical debt or code aging: epistemic drift can happen even when everything appears to be working correctly.

Camilo also ends on a more optimistic note about agents: they can help capture traceability (inputs, outputs, steps, and interactions) and give us better observability tools.

To me, this is a wake-up call for AI companies: an agent shouldn’t just satisfy requirements, it should also preserve the justification behind the system.

Could we lose control over the software we’re building?

🔍 Resources for Learning CS

→ Some thoughts on working with AI models

Eugene Yan’s notes on working with Claude. Really good, and thanks for putting them out there. I learned a few things: context as infra, taste as config, verification for autonomy, scaling via delegation, and closing the loop.

🔍 Resources for Teaching CS

→ In-class writing instead of a semester-long paper

Here is a thoughtful episode about writing that focuses on its role in supporting thinking rather than simply producing an artifact. It explores how that role should shape the design of student writing, with more of the work happening in class as a supported, iterative process rather than primarily through a term-long paper. I hope this podcast episode makes you think about whether your class should have a semester-long group research project or instead focus on more targeted mini-projects.

→ ACM Code of Ethics and Professional Conduct

Great read. Give it 20 minutes. Summer can be a great time to think about these big ethical questions, ones that can't stay as abstract concepts but must become a daily practice. Here's the PDF version.

→ MAP-CS (Mission Aligned Programs for Computer Science)

A multi-institution collaboration supporting mission-aligned computer science programs at liberal arts institutions, with support from the U.S. National Science Foundation. More info here.

🦄 Quick bytes from the industry

→ Exploring with agents

I enjoyed this conversation between Adam Stacoviak and Amelia Wattenberger (designer, data visualization veteran, former GitHub Next member, and now working on Intent at Augment Code).

They discuss the idea that the last 30% of any software project may soon become the hardest part of development. Amelia argues that software engineers are experiencing an identity shift as AI agents increasingly take over implementation tasks. The conversation also explores the redesign of developer tooling in this agent-first world, the evolution from autocomplete to chat to CLI and back to UI, why Intent treats the workspace rather than the chat thread as the core primitive, the tradeoffs between one-worktree-per-agent versus one-worktree-per-task, and why prototyping has become easier while finishing products has become harder.

→ What jobs are AI jobs?

Another great conversation between Benedict Evans and Toni Cowan-Brown on Another Podcast, especially the part about the human element and why jobs like consulting aren’t really AI jobs.

Toni argues that as AI becomes increasingly powerful and eventually taken for granted, the real differentiator will shift from the technology itself to the human element behind its implementation. The real value will come from knowing how to apply AI effectively to real-world problems. Rather than replacing consulting and expertise, AI may actually make trusted human judgment even more valuable: people who can turn technology into strategy, execution, and decisions tailored to specific contexts.

🌎 Computing Education Community

Last day for ITiCSE 2026 early bird registration.

ITiCSE is looking for volunteers for the Conference Committee and conference host teams.

Participants Needed for a Study on Essay Writing and ChatGPT (shared by Andrew Jelson)

UR2PhD opens summer programs for undergraduate researchers and graduate mentors, including synchronous research training courses and an asynchronous pre-research experience designed to build foundational research skills and prepare students for graduate study.

Estrella Mountain Community College is hiring a full-time Artificial Intelligence & Machine Learning faculty member for Fall 2026 (shared by Jim Nichols).

ICCE 2026 (Nov. 30–Dec. 4, Christchurch, New Zealand) is now inviting submissions across seven sub-conferences in computing and education research.

AccessComputing is hosting a book club on Digital Accessibility Ethics (May 21–June 11), focused on disability inclusion, AI, cybersecurity, design, and accessibility in tech through discussion-based sessions (shared by Brianna Blaser).

CCSC Northwestern 2026 (Oct. 9–10, Whitman College, Washington) is accepting submissions for papers, tutorials, panels, workshops, and student posters in computing and CS education. Deadline: June 30 (shared by John Stratton).

🤔 Thought(s) For You to Ponder…

David Alayón doesn’t watch traditional TV. He goes straight to specific sources and has meaningful conversations; he ends up hearing about the important stuff through other people or social media.

One issue related to gambling that I’ve discussed with many young people is self-control, especially how weak it can be among those who are constantly connected and heavily attached to their phones. Their ability to regulate impulses is still developing, which can increase the risk. Gambling is particularly appealing to this age group.

ChatGPT and Claude already “teach” languages better than they did two years ago. Duolingo has branding and gamification, but…

I noticed Jennifer A. Frey sounded a little frustrated with Tulsa in her interview on the Evangelization & Culture Podcast. I’m happy for her and for the UVA students and faculty. Great hire!

I recently discovered the work of Justin Parpan, an art director in the animation industry and a design professor at USC, who creates incredibly textured, semi-abstract digital paintings. Those color palettes are amazing! He also has a Substack where he shares the processes he uses to combine digital and analog textures to achieve the final look and feel. The artwork is outstanding on its own, so getting this level of insight into the creative process is the icing on the cake.

This study showed that AI, without ever seeing the patient and using only the same clinical information a doctor receives during triage, was more accurate than human physicians at determining the urgency level. Of course, AI does not replace physical or human interaction. AI cannot examine the patient or speak with family members. One potential risk is that it could become a “first opinion” that physicians accept without critically evaluating it themselves. Hospitals are already offering courses and tools focused on AI applications in medicine. Several different programs already exist, and new applications are being developed to predict things like hospital length of stay or illness severity, which is especially useful for bed management and hospital capacity planning.

I disagree with Alma Guillermoprieto (the first guest) on some aspects of her geopolitical analysis, but I really liked the metaphor she uses on the cover of “Esta improbable tierra prometida” to talk about Latin America: people in the desert who got off a bus because it broke down and who, even though it’s probably the fifth time that bus has broken down, still keep pushing it. Despite everything, as Alma also says in this segment, we have to keep going; we have to fix what we have and try again because it’s worth it. It’s a region that has never stopped producing beauty, creativity, art, and joy, and its people are masters of creativity. The world needs that. What happens with all those external enemies in Latin America is unfortunate, but its people are still free on the inside.

Carlos Pérez Laporta is the author of Nacidos para vivir. They talk about vocation, fully enjoying life, and unity of life. They recommend The Adventure of Being Human by Piñero, Open Night by Hugo Mujica, Confessions by Saint Augustine, La Grazia by Sorrentino, and The Secret Life of Words by Coixet.

I found this interview with Fr. Mariano Fazio interesting.

Through the great books — the classics — if someone speaks to me about truth, goodness, and beauty; if they can give me the tools to distinguish good from evil, beauty from ugliness, truth from falsehood… that’s also a very natural way of transmitting the Gospel. Good literature conveys what makes the human soul come alive.

If someone jumps straight into reading The Brothers Karamazov, Crime and Punishment, or War and Peace, they may end up discouraged because those books are difficult and very long. But if you start with a book that’s more approachable, you gradually develop a taste for it.

I think we need to carry out, so to speak, an ‘apostolate’ of reading. Those of us who read should encourage young people by saying, ‘Why don’t you try this book, or that one?’ and you’ll see how it opens up new horizons for you.

Books he mentions:

MUBI Encuentros is back with a new season. They bring together two people from the film world to talk. I really liked that the first episode focused on producers, because in the end they’re the ones who decide which films actually get made. Something Marisa Fernández Armenteros said has been stuck in my head. She mentioned that she misses having metrics that could help guide her work more effectively, knowing who’s watching those films, their ages, what kinds of movies they like, and so on.

Can’t wait to listen to the new album from the Spanish band Arde Bogotá. Aimar Bretos interviewed the band in his segment on Hora 25.

A beautiful meditation in Spanish by an Opus Dei priest reflecting on the few words Mary speaks in the Gospel and drawing out possible paths and choices for our lives as Christians, almost like a roadmap for the Christian life.

This discussion between Mila Coco and Juan Rodríguez Talavera about the work behind creating good content really resonated with me. Creating great content means constantly exposing your mind to interesting ideas. You have to consume other high-quality content that performs well, do a bit of reverse engineering to understand why it works, think about how to adapt those ideas to your own context, and figure out how to package them for each platform. It’s a continuous learning process.

Mila also made me think about the email content I consume and create. Getting attention and staying relevant in someone’s inbox has a lot to do with the relationship you build with your audience, how much they enjoy reading you, how you’ve positioned yourself, and whether you can communicate ideas in an entertaining and engaging way.

I really like the reflections in this app. It lets you create a prayer journal inspired by the daily readings.

I don’t know what to make of this yet, but it gives me food for thought:

Study conducted in January and February 2026. Coordinated by José María Díaz-Dorronsoro and promoted by the Pontifical University of the Holy Cross. Sample: 9,000 young people (ages 18–29) across 9 countries (Argentina, Brazil, the Philippines, Italy, Kenya, Mexico, the United Kingdom, Spain, and the United States). Focus: a redefinition of the concepts of work, well-being, and personal fulfillment among Generation Z and Millennials.

It’s true that some companies are going to need fewer employees, and that white-collar jobs (engineers, designers, strategists) and other office-based roles are among those being hit hardest. You can already see it happening at tech consulting firms like Capgemini or Inetum. But this situation also made me think about roles that didn’t even exist a few years ago and are now being created, like ML Researcher or AI Engineer. Maybe newly graduated professionals, building on strong fundamentals, will increasingly need to focus on getting the most out of these agents and models: understanding the business context, figuring out how to turn models into useful tools for company data, fine-tuning and deploying them effectively, and even supervising and evaluating their output. A lot of the time, models produce results without people fully understanding where those outputs actually come from, and companies operating in high-risk environments, for example, need that process to be tightly controlled.

📌 Research Corner

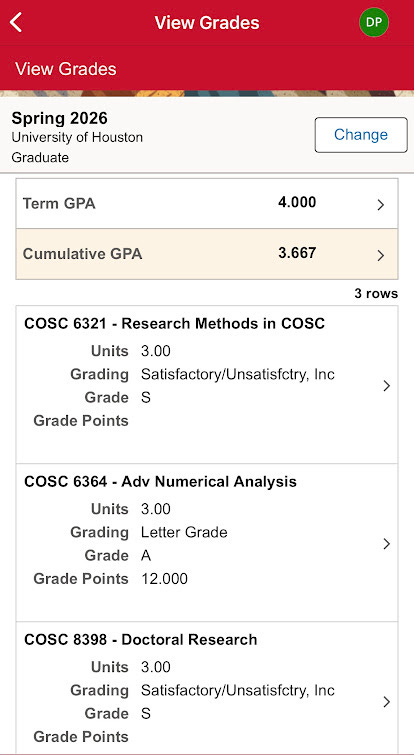

Got my spring grades back. Still surviving the PhD program.

I’ve been working hard on the ITiCSE working group (WG). Here are a few interesting papers I read this week related to our systematic literature review (SLR):

🪁 Leisure Line

All the best with your software engineering interviews, Nebil. I’m sure great opportunities are coming your way!

Last week, I visited Austin for the first time. A UT professor showed me around the UT Austin campus, and I wrapped up the trip with a shake at Amy’s and a visit to the Texas Capitol. Sharing a few photos from the day. The terrace belongs to my friend’s house, where we also had lunch from Pizza di Roma (highly recommend it!).

One of my weird obsessions, I guess, is being the MC at home whenever there’s a birthday celebration. From the decorations in the picture, it’s easy to tell which sport the latest birthday person is into. The rest of the show is impossible to summarize. It was hilarious.

First time flying with KLM, and I’ve been really impressed so far: on-time flights, great service, solid catering, and very smooth baggage coordination. The connection through AMS was super easy too. I’ll definitely fly this route again in the future.

📖📺🍿 Currently Reading, Watching, Listening

Just imagine all the auditions they must have gone through before making it to Ragtime. I love that Tiny Desk gives more visibility to people working on Broadway. Most of them are practically unknown to the general public, even though they have incredible talent.

What an unpleasant experience watching The Last Stop in Yuma County. I didn’t like how the story developed, but it did make me reflect on the consequences of sin.

Powerful documentary on the life of Miguel Gil on 3Cat. Some of the images he captured while covering conflicts helped change the course of history. He was killed in Sierra Leone in 2000. In the documentary, his former colleague David Guttenfelder recalls a difficult moment they experienced together in Zaire in 1997. Miguel was holding a camera in one hand while trying to help people with the other.

Madrid is already counting down the days until Pope Leo XIV’s visit in June. I was especially struck by the courageous and authentic testimony of the city’s mayor, José Luis Martínez-Almeida, in this interview with Father Ignacio Amorós and Pablo Velasco. I listened to it closely, and below I’ve gathered the passages that resonated with me most, doing my best to translate his words into English.

Reading the Gospel every day is what gives you the first step from an inherited faith to a lived faith.

From his parents he learned: consistent hard work, effort, commitment, values, passion, a sense of humor, and not taking yourself too seriously.

What AI must not do is undermine our natural intelligence: understanding, knowledge, memory — the very things that allow us to think for ourselves. I think it’s fantastic technology, but it also comes with risks.

I’ve never hidden the fact that I’m a practicing Catholic. I’m sure many of the people who vote for me are not, I’m convinced of that; but they would rather I be recognizable and transparent about who I am and what my values are than soften or disguise what I believe. I think each of us has to act in accordance with who we are, our beliefs, our intellectual background, and our spiritual baggage.

Respect is the essence of living together peacefully; it means being able to coexist despite our differences. The deterioration of coexistence begins when people start labeling and canceling those who think differently, believing they no longer have the right to express an opinion.

I love the chosen motto: “Lift up your eyes.” More than ever in the times we live in, I think it’s essential that we learn to lift our gaze — not remain fixed on the ground or attached only to a worldly perspective, but raise our eyes to understand what is truly important and transcendent, recognize it clearly, and act accordingly.

There’s going to be a Corpus Christi procession with the Holy Father through the streets of Madrid!

In terms of the city itself — its image, international standing, and global projection — it’s going to be extraordinary.

Seeing the Pope for four days, the vigil, the Mass, the events he will celebrate, the Corpus Christi procession, and a city fully devoted to this visit will be something truly exceptional. I’m sure grace will be poured out over all of Madrid. He is a moral and spiritual compass that benefits all of us, and I’m convinced this visit will bear abundant fruit.

The three pieces of advice the Pope gave him:

Be courageous. Don’t hide. Don’t lower your head. You are Catholic and you are in public life.

Never give up your principles.

The ultimate limit to any decision is respect for human life and the dignity of the person.

In Madrid there are realities that are neither very visible nor very admired and that, were it not for the Church, Cáritas, and the Church’s charitable work, would hardly be sustained by anyone in this city. Many people want to reduce the Church to an NGO. But that charitable work is born from love for Christ. It is precisely the spiritual dimension of the Church that makes such immense service possible. And those who stand beside the most vulnerable, the people nobody wants to see or be with, do so precisely out of love for Christ.

—What makes you happy today?

—My wife and my son.

Pablo Velasco: the best thing you can give a child is to love your wife, so that he sees how you love his mother.

If you ask me what takes away my joy, I’d say that sometimes it’s not being able to make the right decision. And also making decisions knowing they may not turn out the way you hoped.

But you have to face it. I think about that too — it happens to all of us; we’re human.

What we cannot do is refuse to correct ourselves out of fear. If you make a bad decision, if you mess up, you correct it and acknowledge it. And people understand that; there’s no problem with it. You don’t have to pretend to be infallible.

💬 Quotable

In the twilight of life, we will be judged on love alone.

― St. John of the Cross

🌐 Cool things from around the internet

A collection of links to stuff I think is worth sharing.

🔗 Miniatur Wunderland — hours of craftsmanship, thousands of details.

🔗 im Water — wow, such a cool idea and design.

🔗 Sean Penn in The Secret Life of Walter Mitty — the snow leopard scene is still one of those moments that makes the world stop for a second. That reflection on living in the present, in the moment…

Issue #44 of Computing Education Things was written while listening to:

🔗 Quick Links

🎧 Listen to Computing Education Things Podcast

💌 Let’s talk: I’m on LinkedIn or Instagram

If you’re enjoying Computing Education Things, please like, comment, or share this post! You can also support this work through Buy Me A Coffee. And if you’re finding value in the newsletter, consider forwarding it to a friend, colleague, or family member to help the community keep growing. Thanks for reading!